95% of AI Projects Fail. Don’t Let Your Call Center Be One of Them.

By now, you’ve probably heard the stat: 95% of AI projects fail. It’s been splashed across headlines and whispered in boardrooms ever since MIT’s 2025 study on enterprise AI adoption found that the vast majority of pilots fizzle before delivering measurable business value (MIT Sloan, Windows Central, The AI Navigator).

That failure rate isn’t just academic. It’s a warning sign for executives under pressure to “do something with AI.” Boards are demanding results, employees are skeptical, and customers are unforgiving when half-baked solutions make their experience worse. Nowhere is this pressure more acute than in call centers, where AI has been sold as the silver bullet to reduce costs and transform customer experience.

The problem? Most call center AI projects don’t even make it out of the pilot phase. The technology may be powerful, but when the rollout is rushed, misaligned, or poorly integrated, the results are predictable: frustrated employees, wasted budgets, and a public failure that makes the next project even harder to sell.

But here’s the thing—failure isn’t inevitable. A small percentage of organizations are already proving AI can make call centers faster, smarter, and more resilient. The difference isn’t the tools they buy. It’s how they implement them.

This article will break down why so many call center AI projects fail, and more importantly, what you can do to ensure yours doesn’t.

The Real Reasons Behind the 95% Failure Rate

If we peel back the headlines, the real story behind AI’s 95% failure rate is that most projects collapse under the same set of avoidable mistakes. In call centers, the pressure to “do something with AI” often leads to rushed pilots, unclear success metrics, and cultural resistance long before the technology itself has a chance to prove value. To understand how not to become another cautionary tale, it’s worth starting with the most common—and most fatal—mistake: launching without a clear path to ROI.

1. No Clear ROI

Under that pressure, projects often start for the wrong reasons: to appease a board, to follow competitors, or to run with a vendor’s shiny demo. But without a clear business case (shorter handle times, fewer escalations, lower attrition), pilots rarely connect to the P&L.

This is why so many projects stall out after the pilot phase. They look impressive in a slide deck, but when budget reviews come around, leaders ask the one question no one wants to answer: what value did this actually create? If the answer isn’t measurable, the project dies.

2. People and Culture Problems

AI transformation doesn’t happen in a vacuum. It happens through people—and too often, people are an afterthought.

Agents see AI as a threat to their jobs. Managers see it as a top-down initiative they weren’t consulted on. And executives underestimate how much training, communication, and cultural readiness is required for adoption. The result? Resistance, slow uptake, and even outright sabotage.

A recent survey by Boston Consulting Group found that fewer than 20% of frontline employees feel confident using AI in their day-to-day work. If your people don’t understand it, trust it, or see “what’s in it for them,” no amount of investment will make it stick.

3. Broken Plumbing (Integration + Data)

AI isn’t magic—it runs on infrastructure. And in call centers, that infrastructure is notoriously complex. CRMs, telephony systems, workforce management tools, QA software… if the AI solution doesn’t plug into them seamlessly, it creates more friction than it solves.

Then there’s the data problem. Call centers produce mountains of data, but much of it is siloed, messy, or incomplete. “Garbage in, garbage out” isn’t just a cliché—it’s the reality. Poor data hygiene leads to bots giving wrong answers, analytics missing the mark, and employees spending more time cleaning up after AI than doing their actual jobs.

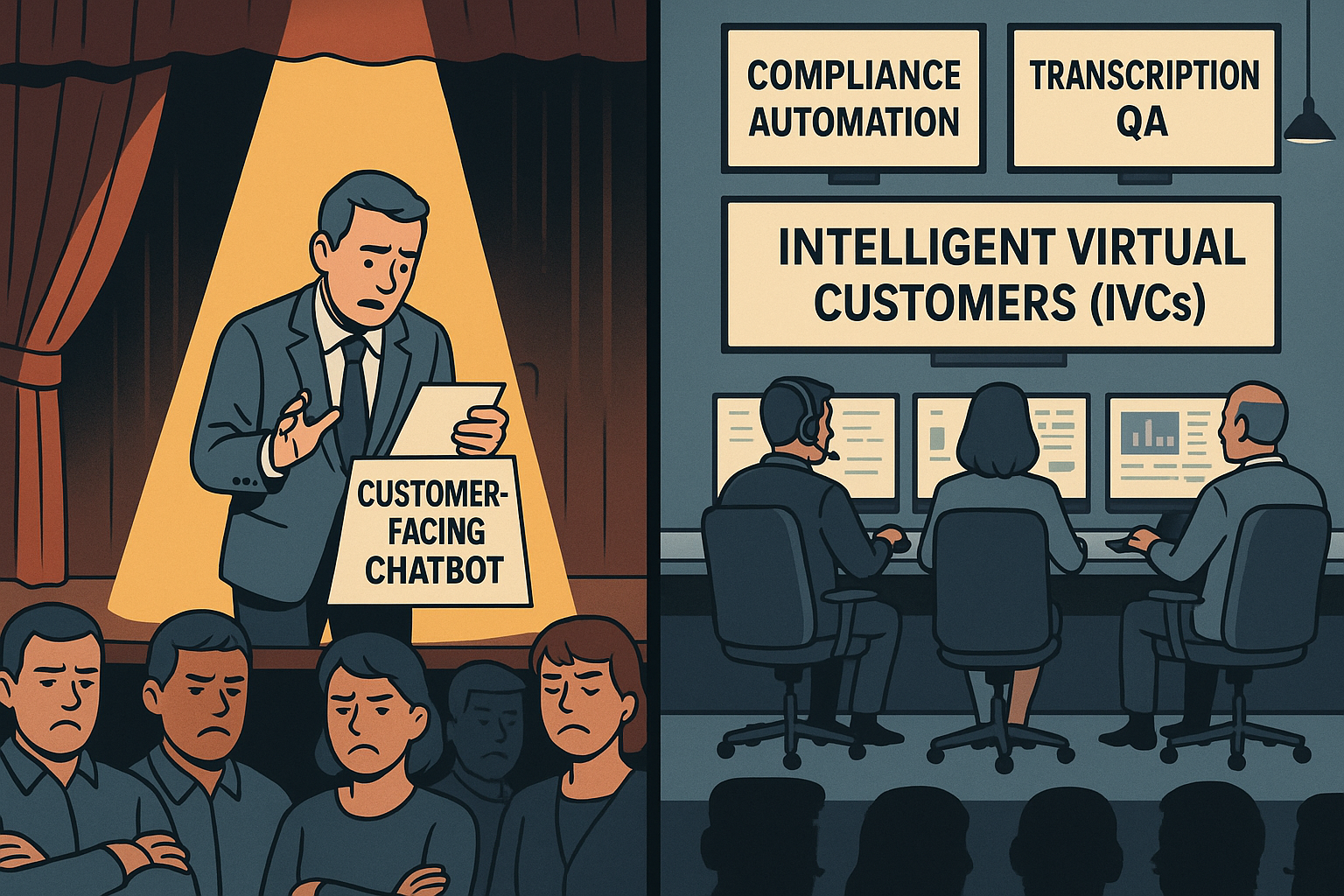

4. Misplaced Bets

Finally, there’s the temptation to swing for the fences. Leaders want big, customer-facing wins—chatbots that deflect thousands of calls, or voice AI that handles entire conversations. The problem? These are the riskiest bets. Failures are public, employees lose trust, and customers are quick to share horror stories on social media.

Meanwhile, the boring stuff—back-office automation like compliance checks, call routing optimization, or transcript QA—quietly delivers reliable ROI. But because it’s less flashy, it often gets overlooked until budgets are burned and credibility is gone.

The Pattern

Call center AI projects don’t fail because the technology isn’t ready. They fail because organizations underestimate the cultural lift, overcomplicate the rollout, and bet on the wrong projects.

Until those fundamentals are addressed, AI will remain a boardroom talking point instead of a bottom-line driver.

Solutions: How to Avoid Being in the 95%

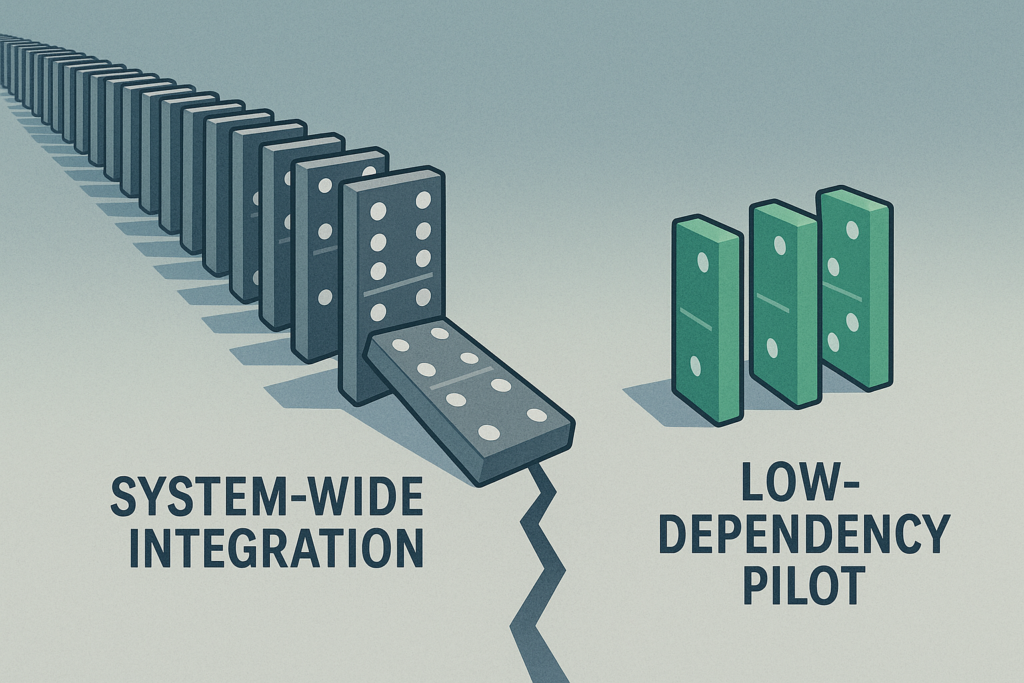

1. Reduce Variables: Start Small, Not System-Wide

Simplify integration: launch where dependencies are low. The biggest AI failures are not due to the technology; they’re due to how organizations deploy it. Attempting enterprise-wide automation without first ironing out integration and infrastructure is a high-risk move that is liable to detonate mid-flight.

A recent TechRadar Pro analysis labels this the “last-mile problem,” where grand digital transformation plans derail when hitting legacy systems, tangled data governance, and real-world constraints.

The lesson: “implementation is strategy”—not just choosing the tech, but ensuring it works in practice.

Similarly, Gartner reports that a whopping 77% of engineering leaders say integrating AI into existing applications remains a major challenge, and advises selecting platforms with cohesive ecosystems rather than patching together disparate tools.

Where to start: low-dependency, high-ROI projects

- Call Routing Automation: Use AI to intelligently pre-route calls based on simple metadata (region, priority, agent skill set), which often requires minimal CRM integration but delivers clear impact on handling times and customer experience.

- Workforce Scheduling Support: Implement AI assistants that leverage historical patterns for smarter shift assignments or adherence monitoring. Again, these typically interact only with workforce management modules, not full CRM pipelines.

- Quality Assurance Automation: Instead of automating agent-facing scripts or customer interactions, choose an internal process, like analyzing call transcripts for compliance or sentiment, that runs independently and delivers immediate insight and ROI.

Select initial projects with low system coupling—components that can run nearly standalone or work within well-defined scopes. These “minimum viable integrations” reduce complexity while proving value in real business terms.
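To make the call routing idea concrete, here is a minimal sketch of metadata-based pre-routing. It is a plain rule-based baseline, not a trained model: the `Agent` and `Call` types, their fields, and the tie-breaking rule are all assumptions made for illustration. In practice an AI scoring function would likely replace the sort key inside `route`, while the low-coupling shape (a few metadata fields in, an agent out) stays the same.

```python
from dataclasses import dataclass


@dataclass
class Agent:
    name: str
    region: str
    skills: set[str]
    open_calls: int = 0  # current load, updated as calls are assigned


@dataclass
class Call:
    call_id: str
    region: str
    priority: int  # 1 = most urgent (hypothetical convention)
    required_skill: str


def route(call, agents):
    """Return the best available agent for a call, or None if nobody qualifies."""
    qualified = [a for a in agents if call.required_skill in a.skills]
    if not qualified:
        return None
    # Prefer agents in the caller's region (False sorts before True),
    # then the agent with the fewest open calls.
    best = min(qualified, key=lambda a: (a.region != call.region, a.open_calls))
    best.open_calls += 1
    return best


def route_queue(calls, agents):
    """Assign calls in priority order; sorted() is stable, so ties stay FIFO."""
    ordered = sorted(calls, key=lambda c: c.priority)
    return {c.call_id: route(c, agents) for c in ordered}
```

Note that the only inputs are region, priority, and skill, which is what keeps the integration surface small: nothing here needs a full CRM record.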

2. Build Employee Buy-In Early

From skepticism to empowerment: Make AI feel like a help, not a threat.

Set the Stage with Data

Employee sentiment around AI adoption is fraught with concern. A recent GoTo survey found that 62% of employees believe AI is significantly overhyped, and 86% admit they aren’t using it to its full potential—mainly because they lack confidence in how or where it fits into their day-to-day work.

Meanwhile, a Pew Research Center study shows that only 16% of workers use AI at all, and a staggering 80% do not—highlighting a gap between access and adoption.

These trends reveal a hidden truth: resistance isn’t about stubbornness—it’s about uncertainty.

Focus: Education Before Automation

Instead of positioning AI as a replacement, frame it as a tool that makes agents’ lives easier. Provide contextual training tailored to real workflow scenarios, and walk through how AI takes over mundane tasks, such as auto-sorting inbound calls or flagging compliance breaches, without replacing human judgment.

Pilot with Employee Champions

AI adoption spreads best through peer advocacy, not top-down mandates. Identify a group of motivated agents—trusted individuals who are curious and coachable—and involve them early. They act as localized influencers: shaping adoption norms, providing feedback, and demonstrating AI’s value in their own workflows. This grassroots approach builds momentum from the frontline upward.

Build Trust Through Communication

Trust in leadership strongly influences trust in AI. A Harvard Business Review insight underscores that employees are skeptical about AI when they don’t trust the leadership behind it—especially if they feel AI is being used without transparency or benevolent intent.

Open dialogue about AI’s role, limitations, and safety makes adoption feel intentional, not imposed. Track not just outcomes but also how clearly the message is landing.

3. Automate the Back Office First

Minimize risk—let quiet wins build credibility.

“Automate the back office first” may sound like an overused mantra, but it’s popular for a reason: starting where AI has fewer customer-facing risks gives organizations the breathing room to prove ROI without the PR nightmare of a failed chatbot rollout.

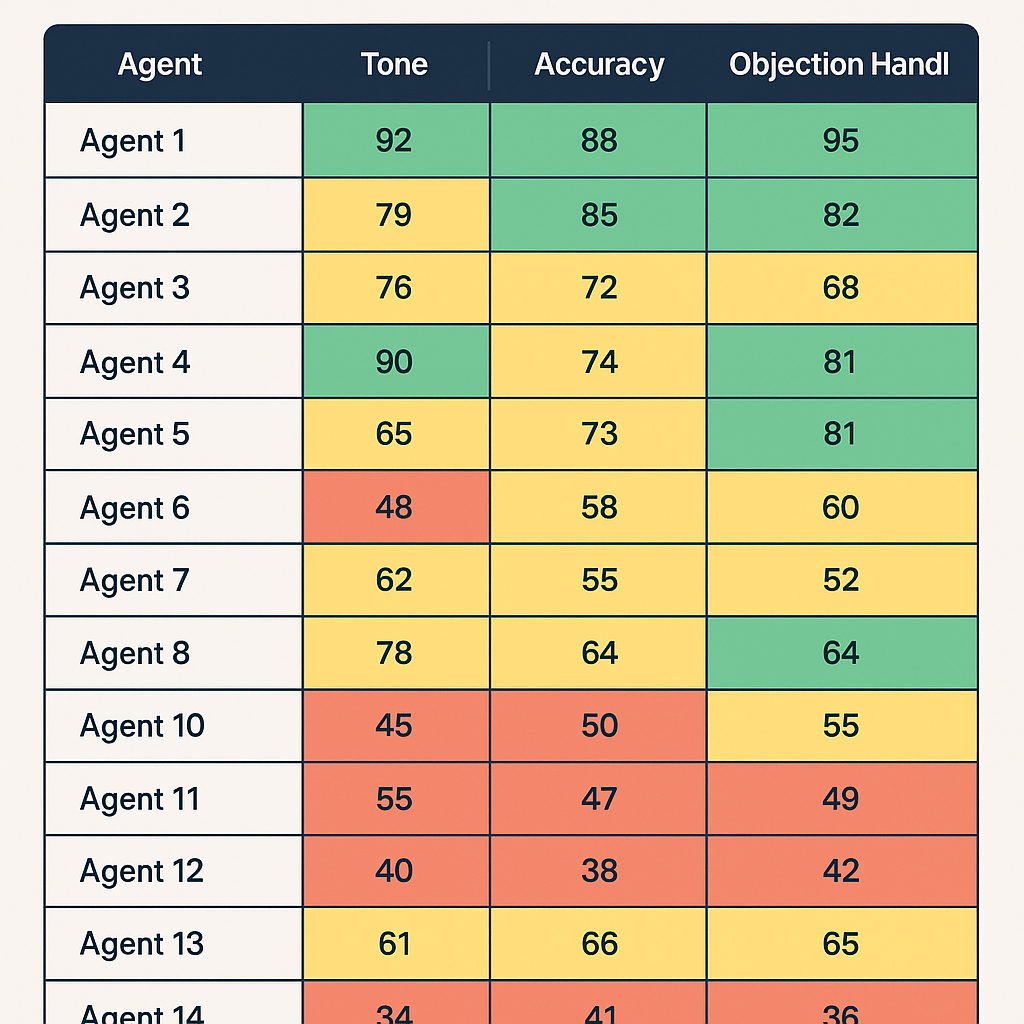

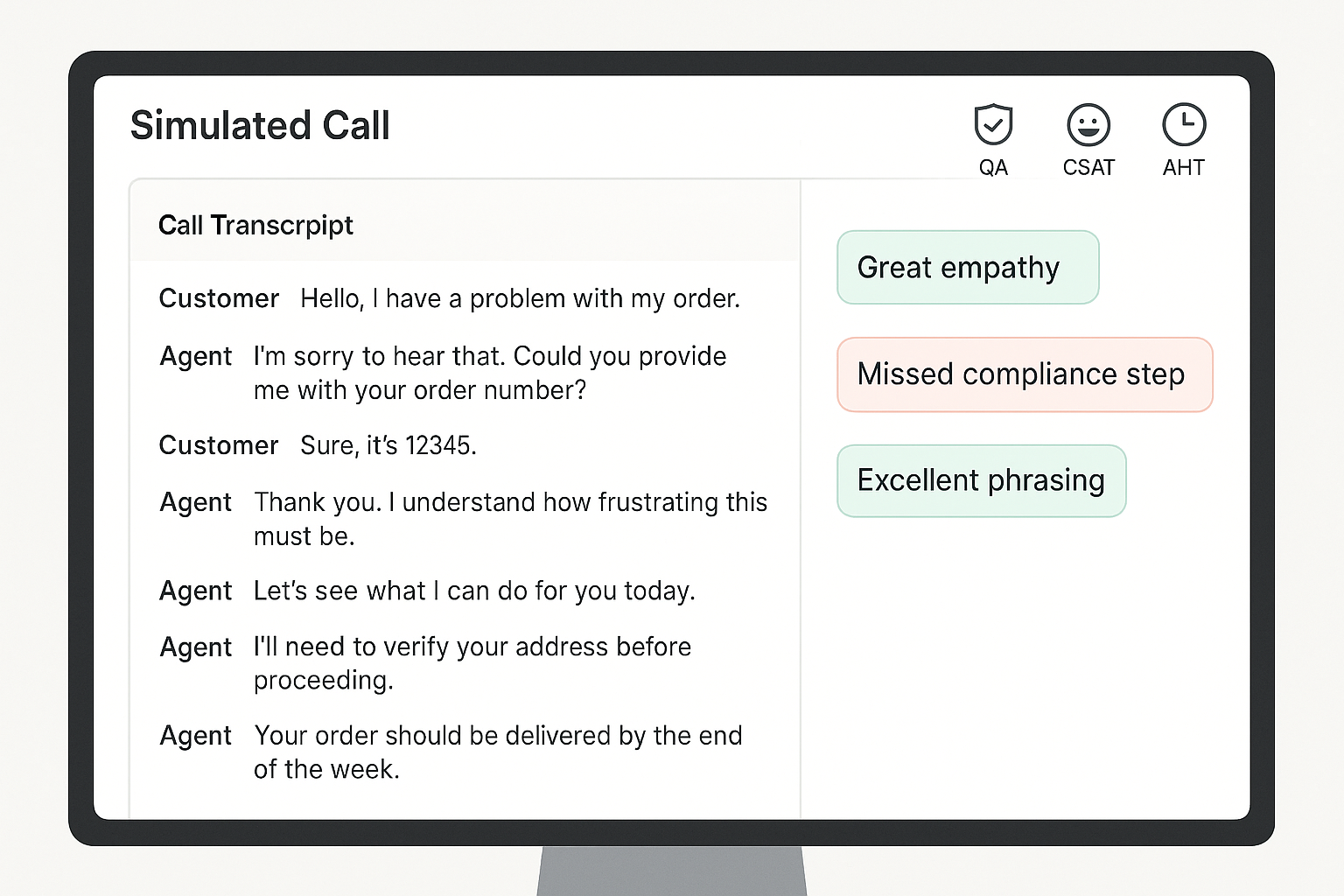

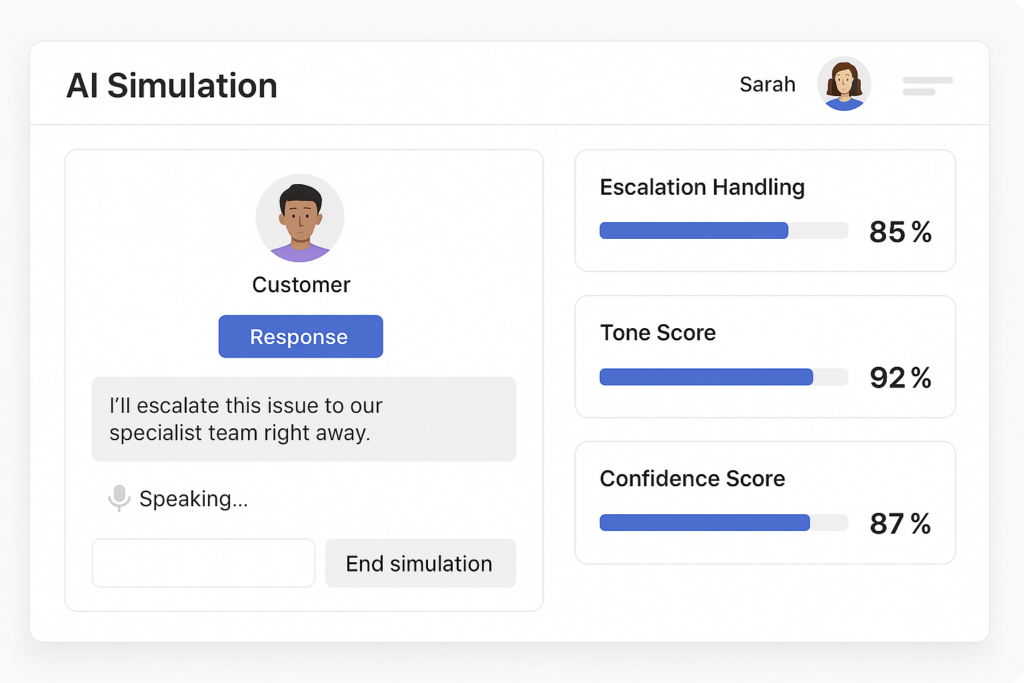

Back-office functions—compliance, transcription QA, performance analytics, and Intelligent Virtual Customers (IVCs)—are ideal launchpads. They’re process-heavy, measurable, and less exposed to the customer’s direct line of sight.

What to Automate First

- Compliance Checks: Automate auditing call transcripts to flag regulatory or policy issues.

- Transcription QA: Use AI to analyze recordings for accuracy, sentiment, or script adherence.

- Performance Analytics: Spot patterns in agent productivity, escalation trends, or customer sentiment shifts.

- Intelligent Virtual Customers (IVCs): Synthetic customers designed to simulate real conversations. Instead of risking failure with live customers, IVCs let you test, train, and refine AI models against realistic scenarios—quietly, safely, and cost-effectively.
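As an illustration of the first item on this list, the sketch below audits a call transcript for one required disclosure and one prohibited claim. It uses simple regular expressions rather than a language model, and both rules (the `recording_disclosure` and `guarantee_claim` patterns) are hypothetical examples, not real regulatory requirements. A production system would presumably use a richer model and a rule set owned by the compliance team.

```python
import re
from dataclasses import dataclass

# Hypothetical rules for illustration only; real policies come from compliance.
REQUIRED = {
    "recording_disclosure": re.compile(
        r"\bthis call (may be|is being) recorded\b", re.IGNORECASE
    ),
}
PROHIBITED = {
    "guarantee_claim": re.compile(
        r"\bguaranteed? (returns?|approval)\b", re.IGNORECASE
    ),
}


@dataclass
class Finding:
    rule: str
    kind: str     # "missing" or "prohibited"
    excerpt: str  # offending line, empty for missing disclosures


def audit_transcript(agent_lines):
    """Flag missing required phrases and any prohibited phrases in agent speech."""
    findings = []
    full_text = " ".join(agent_lines)
    for rule, pattern in REQUIRED.items():
        if not pattern.search(full_text):
            findings.append(Finding(rule, "missing", ""))
    for line in agent_lines:
        for rule, pattern in PROHIBITED.items():
            if pattern.search(line):
                findings.append(Finding(rule, "prohibited", line.strip()))
    return findings
```

Because it reads transcripts after the fact and only produces a list of findings for a human reviewer, a check like this can run without touching live customer interactions.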

Case in Point: Commonwealth Bank’s Cautionary Tale

When Australia’s Commonwealth Bank (CBA) pushed AI voice bots directly into customer service, the outcome was public and painful. Bots failed to resolve issues, call volumes rose, and 45 jobs were cut prematurely before the bank had to backpedal amid backlash.

It’s a textbook example of chasing a headline instead of proving AI’s value in safer, internal domains first.

Why It Works

- Low visibility = low risk: Errors happen behind the scenes, not in front of customers.

- Proof of value: Automating “boring but critical” processes shows real, measurable ROI.

- Foundation for scale: Early wins build executive and employee confidence for more ambitious rollouts.

4. Vendor Strategy: Safe Bet vs. Fast Bet

Choosing the right partner can make or break your AI project.

Option 1: Incumbent Vendors — The Safe Bet

Large, established vendors (think your existing CRM, workforce management, or cloud providers) come with undeniable advantages: scale, security, and the credibility that reassures your board. They’ve delivered before, and they’ll integrate into your existing tech stack with less friction.

The trade-off? Speed. Big vendors often move slowly, layering AI into their products incrementally. You’ll sacrifice agility for stability—but for some executives, especially those under scrutiny from boards or regulators, that’s the right call.

Option 2: Startups — The Fast Bet

Smaller, specialized vendors often innovate faster. They can spin up pilots in weeks, customize deeply for niche workflows, and push the boundaries of what’s possible with AI.

But there are risks: limited resources, unproven scalability, and the potential for hiccups that frustrate employees or erode credibility with customers. A failed startup partnership can set your AI agenda back years—not because the tech was bad, but because your organization loses confidence.

Vendor Strategy: Safe Bet vs. Fast Bet

| Factor | Incumbent Vendor (Safe Bet) | Startup Vendor (Fast Bet) |
| --- | --- | --- |
| Speed to Deploy | Slower, incremental rollout | Fast, agile pilots |
| Integration | Strong alignment with existing stack | Flexible, but may require workarounds |
| Credibility with Board | High: proven track record | Mixed: depends on reputation |
| Risk of Failure | Low technical risk, slower ROI | Higher risk of hiccups, potential setbacks |
| Innovation | Steady, but rarely disruptive | Cutting-edge, niche solutions |
| Scalability | Enterprise-grade, reliable | May struggle at large volumes |
| Best Fit When… | Board/regulators demand stability; credibility matters most | Speed and differentiation are critical; appetite for risk is higher |
| Hybrid Strategy | Use for customer-facing or mission-critical AI | Use for back-office pilots and innovation sprints |

The Executive Framework: Choosing Your Path

When deciding between safe and fast, align the choice to your risk appetite and board expectations:

- If credibility matters most: Stick with incumbents. They provide a defensible, low-risk path to AI adoption.

- If speed and differentiation are critical: Partner with startups. Be ready to embrace hiccups as the price of innovation.

- If you want both: Consider a hybrid strategy—pilot with a startup in the back office (low risk, high learning), while aligning your customer-facing roadmap with a trusted incumbent.

Bottom line: There’s no “right” choice, only the choice that fits your strategic posture. The wrong vendor isn’t just a missed opportunity—it can turn your call center into another 95% statistic.

Executive Playbook: Making Call Center AI Work

AI success in call centers isn’t about chasing the flashiest tools. It’s about discipline, focus, and choosing battles you can win. Here’s the checklist every executive should keep in mind before greenlighting the next AI project:

✅ Tie Every Pilot to Measurable ROI

If you can’t connect the project to the P&L, don’t start it. Define success upfront in hard metrics: reduced handle time, lower attrition, higher CSAT, or compliance cost savings. Every pilot should answer the board’s question: “What business value did this create?”
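One way to put a number behind that question is a back-of-the-envelope calculation like the sketch below. The function names and the formula (hours saved multiplied by a loaded hourly cost) are assumptions chosen for illustration, and every figure in the example is made up. Your finance team’s model will likely include more terms, such as ramp-up time and attrition effects.

```python
def handle_time_savings(calls_per_year, seconds_saved_per_call, loaded_cost_per_hour):
    """Annual labor savings from shaving handle time, in currency units."""
    hours_saved = calls_per_year * seconds_saved_per_call / 3600
    return hours_saved * loaded_cost_per_hour


def roi(annual_benefit, annual_cost):
    """Simple ROI: net benefit divided by cost (0.5 means a 50% return)."""
    if annual_cost <= 0:
        raise ValueError("annual_cost must be positive")
    return (annual_benefit - annual_cost) / annual_cost


# Illustrative inputs only, not benchmarks:
# 1.2M calls per year, 30 seconds saved per call, 36 per loaded agent hour.
benefit = handle_time_savings(1_200_000, 30, 36.0)
pilot_roi = roi(benefit, annual_cost=240_000)
```

With these made-up inputs the pilot saves 10,000 agent hours, worth 360,000 a year, against a 240,000 annual cost, for an ROI of 0.5. The useful part is not the arithmetic but agreeing on the inputs before the pilot starts.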

✅ Pick “Low Surface Area” Projects First

Start where integration is simplest and dependencies are minimal. Call routing, workforce scheduling, and QA automation deliver quick wins without touching every system in the stack. Prove value before attempting system-wide transformations.

✅ Train Employees and Align Incentives

AI doesn’t work if people won’t use it. Invest in education that shows employees how AI helps their workflows rather than replacing them. Reward early adopters, celebrate quick wins, and use employee champions to spread momentum.

✅ Prioritize Back-Office Before Customer-Facing

Public-facing AI failures destroy credibility fast. Back-office automation—compliance checks, transcription QA, performance analytics, Intelligent Virtual Customers (IVCs)—delivers ROI quietly while giving you space to refine the technology.

✅ Match Vendor Choice to Risk Appetite

Don’t let vendor selection be an afterthought. If stability and credibility matter most, lean on incumbents. If speed and differentiation are critical, partner with startups. Better yet, build a hybrid strategy: use startups for low-risk pilots, then scale with trusted incumbents.

The Bottom Line

AI projects succeed when leaders treat them as business initiatives, not tech experiments. Anchor every step in ROI, simplify your first moves, bring employees along for the ride, and choose vendors with your strategic posture in mind. Do this, and your call center won’t just avoid being part of the 95%—it will help define the playbook for the 5%.

TL;DR: The 5% Opportunity

The numbers may be grim—95% of AI projects fail—but they’re not destiny. For call centers, success isn’t about betting on the flashiest AI or rushing to impress the board with a chatbot demo. It’s about focus, realism, and cultural readiness.

The difference between the 95% that fail and the 5% that succeed isn’t the technology. It’s leadership. Leaders who demand measurable ROI, start small, bring employees along, and place smart vendor bets are already proving AI can make call centers more efficient, resilient, and customer-centric.

As an executive, you don’t have the luxury of treating AI as an experiment. Your job, your team, and your customer experience depend on getting it right. The good news: you can get it right—if you build deliberately, not reactively.

So here’s the call to action: Don’t chase the hype. Build the foundation that makes your call center part of the 5%.